AI Guardrails: The Essential Security Layer for Modern AI Systems

Would you trust an AI assistant with access to your company’s confidential emails, customer databases, and financial records? If you’re deploying AI systems in production, you already are and recent security breaches show exactly what can go wrong when AI systems lack proper guardrails.

The Problem: When AI Safety Fails

Recent months have witnessed a series of high-profile AI security incidents that reveal the urgent need for robust protection mechanisms:

EchoLeak: The Zero-Click Attack – Attackers embedded malicious prompts in emails using sophisticated character substitution techniques. Microsoft 365 Copilot automatically processed these poisoned emails, exfiltrating sensitive data from OneDrive, SharePoint, and Teams without any user interaction required. The attack moved through approved channels with no visibility at the application or identity layers. Read more →

The Drift-Salesforce Supply Chain Breach – Threat actors compromised OAuth tokens from Drift’s Salesforce integration, gaining access to over 700 customer environments. The breach cost an estimated $200 million and affected organizations worldwide. Traditional security monitoring missed it because the activity appeared legitimate it came through trusted third-party connections. Read more →

Amazon Q Developer Compromise – A hacker planted prompt injection code in the official VS Code extension for Amazon’s AI coding assistant. The compromised version, which passed Amazon’s verification, could have wiped users’ local files and disrupted their AWS infrastructure. It remained publicly available for two days. Read more →

The Arup Deepfake Fraud – An engineering firm lost $25 million when an employee transferred funds after participating in a video conference call populated entirely by AI-generated deepfakes of their CFO and financial controller. Read more →

The statistics are sobering: 87% of enterprises lack comprehensive AI security frameworks, attackers breach AI systems in an average of 51 seconds, and shadow AI incidents cost $670,000 more than traditional breaches. [Sources: Gartner, CrowdStrike]

What Are AI Guardrails?

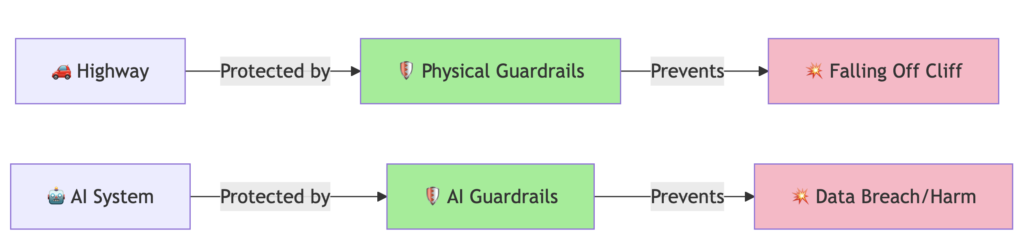

AI guardrails are validation and control layers that establish boundaries for AI system behavior, ensuring outputs remain safe, compliant, and aligned with organizational policies. Think of them like highway guardrails ,they don’t stop you from driving, but they prevent catastrophic failures when things go wrong.

Unlike traditional software that follows deterministic rules (same input → same output), AI systems are probabilistic ,the same input can produce different outputs. This unpredictability creates unique security challenges that traditional perimeter defenses can’t address.

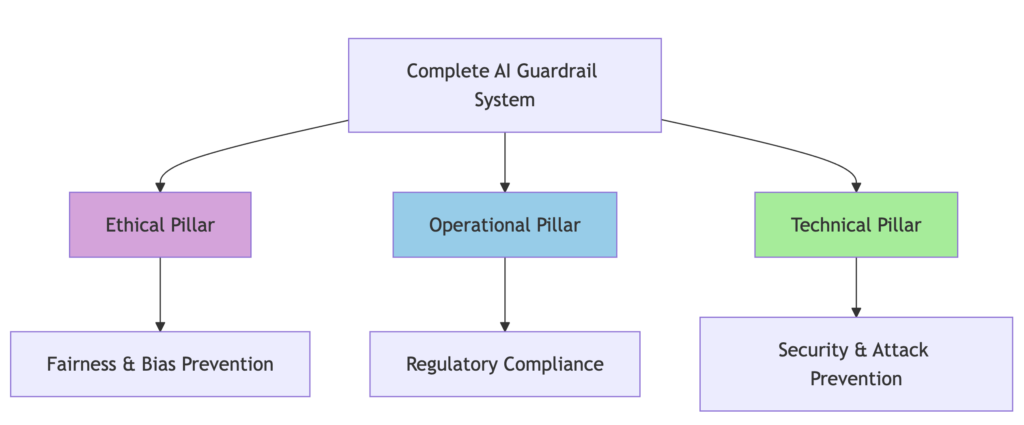

The Three Pillars of AI Guardrails

Ethical Guardrails ensure fairness and prevent bias. For example, preventing hiring AI from favoring candidates based on gender or ethnicity by monitoring outputs for demographic patterns.

Operational Guardrails handle regulatory compliance: GDPR, HIPAA, EU AI Act, ISO 42001. They log every AI decision, flag high-risk transactions, and maintain complete audit trails.

Technical Guardrails prevent harmful outputs through real-time content filtering, anomaly detection, and attack pattern recognition.

All three pillars must work together. Skip one, and you’re vulnerable.

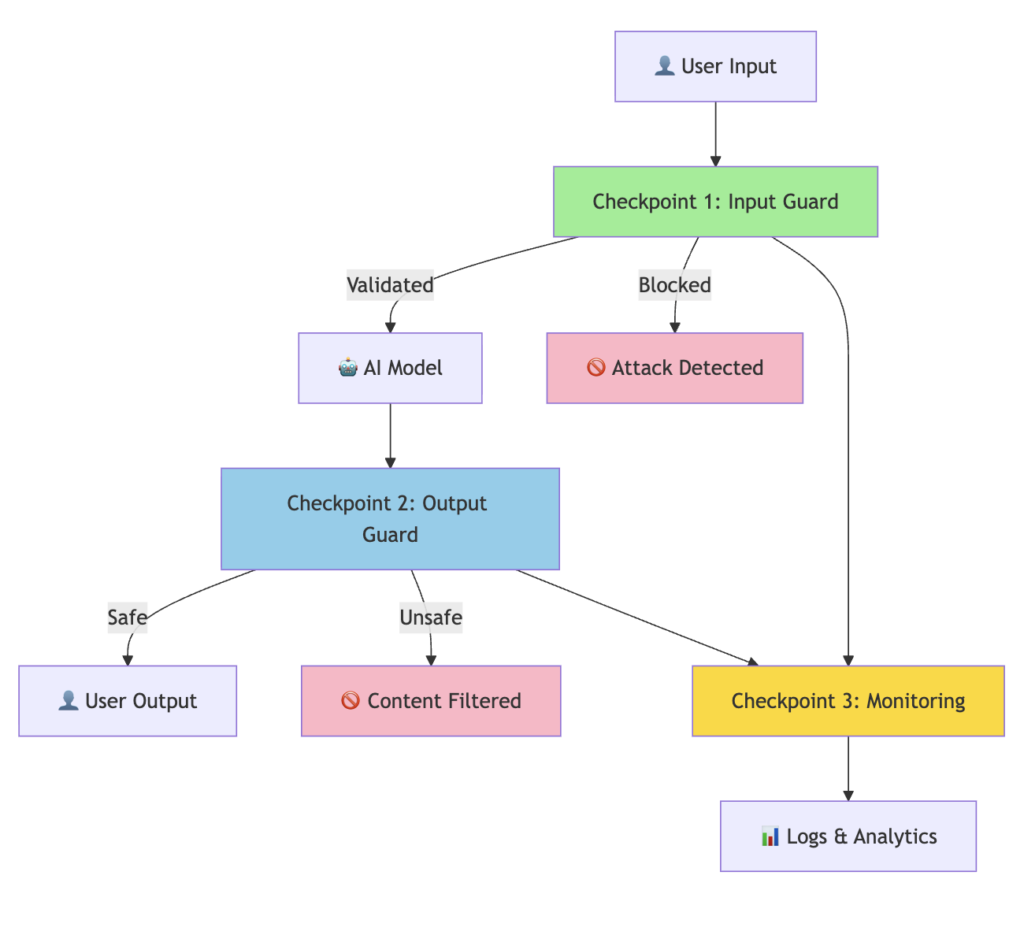

How Guardrails Work: The Three-Checkpoint System

Checkpoint 1: Input Validation scans user prompts before they reach the AI, detecting prompt injection (“Ignore all previous instructions…”), jailbreak attempts, and encoded attacks. Research shows 20% of jailbreaks succeed in an average of 42 seconds without proper input guards. [Source: Pillar Security]

Checkpoint 2: Output Filtering examines AI responses before delivery, catching PII (emails, SSNs, credit cards), toxic language, hallucinations, and data leakage. Recent studies found 90% of successful AI attacks leak sensitive data. [Source: Pillar Security]

Checkpoint 3: Continuous Monitoring tracks violation trends, attack patterns, and model drift. It enables real-time alerts when anomalies occur like 50 jailbreak attempts from a single IP in one hour.

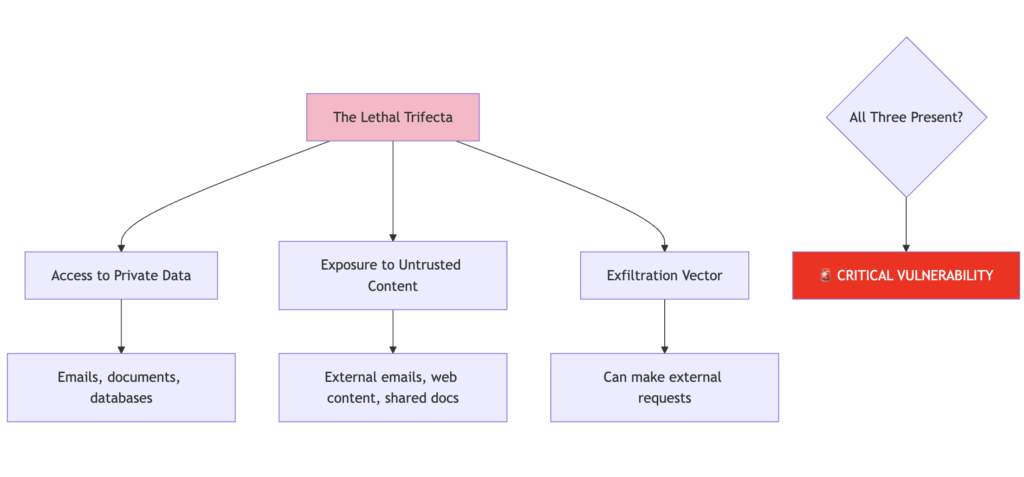

The Lethal Trifecta: When AI Becomes Critically Vulnerable

Security researcher Simon Willison identified three conditions that make AI systems critically vulnerable:

If your AI system has all three, it’s vulnerable. Period. Both the EchoLeak and Amazon Q incidents fell within this framework. Read more →

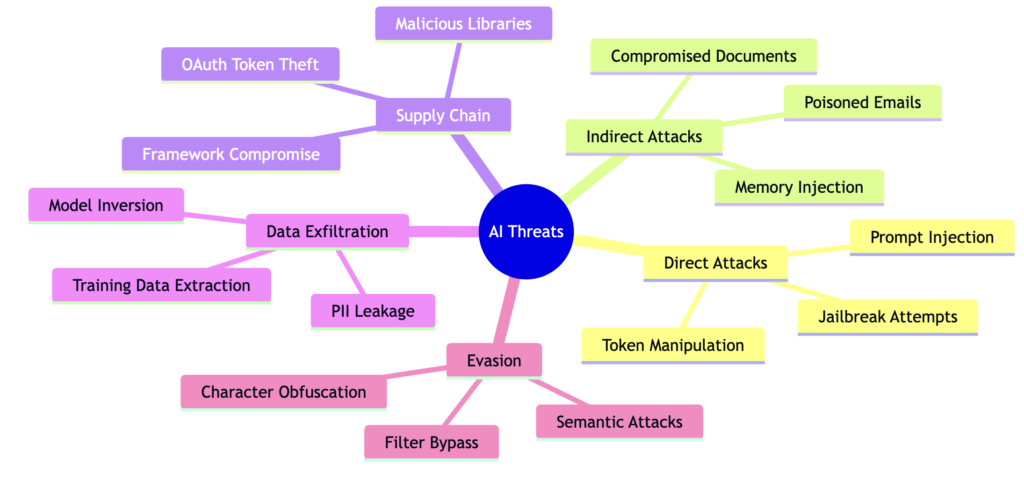

Understanding the Attack Landscape

The OWASP Top 10 for LLM Applications ranks prompt injection as the #1 threat. Unlike traditional SQL injection with recognizable signatures, prompt injections operate at the semantic layer they’re attacks through meaning, not code. [Source: OWASP]

Top Frameworks and Tools

Guardrails AI – Open-source Python framework with 100+ pre-built validators covering toxic language, PII detection, hallucination prevention, and more. Easy to implement and highly customizable. Visit →

from guardrails import Guard

from guardrails.hub import ToxicLanguage, DetectPII

guard = Guard().use_many(

ToxicLanguage(threshold=0.5),

DetectPII(["EMAIL", "PHONE", "SSN"])

)

guard.validate("Contact me at john@email.com")

# Result: 🚫 PII detected - BLOCKED

NVIDIA NeMo Guardrails – Enterprise-grade toolkit with GPU acceleration achieving sub-50ms latency. Uses domain-specific “Colang” language for dialogue control. Ideal for high-throughput applications like real-time customer service. Visit →

AWS Bedrock Guardrails – Cloud-native multimodal protection blocking up to 88% of harmful content across text and images. Single policy protects all models in your Bedrock account. Seamless AWS integration. Visit →

Robust Intelligence AI Firewall – Enterprise security platform mapping directly to OWASP Top 10 for LLMs. Auto-profiles models with algorithmic red-teaming. Built for compliance-heavy industries like finance and healthcare.

| Framework | Latency | Cost | Best For |

|---|---|---|---|

| Guardrails AI | 100-200ms | Free/Open Source | Custom logic, startups |

| NVIDIA NeMo | <50ms | Free/Open Source | High-throughput, real-time |

| AWS Bedrock | 100-150ms | Pay-per-use | AWS ecosystem |

| Robust Intelligence | <100ms | Enterprise | Regulated industries |

Metrics That Matter

Effective guardrails balance three critical dimensions:

Risk Mitigation

- Recall (True Positive Rate): >95% target

- Precision: >80% target

- False Positive Rate: <2% target

User Experience

- Latency: <100ms for real-time apps

- Pass-through rate: >98% of legitimate queries

- User satisfaction: Minimal friction

Scalability

- Throughput: 1000+ queries/second for enterprise

- Cost efficiency: <5% overhead on total AI costs

- Infrastructure: Reasonable compute requirements

The Benchmark Paradox

Recent research testing 10 guardrail models across 1,445 prompts revealed a disturbing pattern: benchmark performance doesn’t predict real-world effectiveness.

One model scored 91% on public benchmarks but only 33.8% on novel attacks ,a 57.2 percentage point gap. This suggests benchmark contamination or overfitting. The best-performing model for generalization showed only a 6.5% gap between benchmark and novel attacks. [Source: arXiv Research]

Key insight: Choose guardrails based on generalization ability, not just benchmark scores. Test against novel attacks that mimic real adversarial behavior.

Implementation Best Practices

Start with Risk Assessment – Identify critical use cases, map data sensitivity, define risk thresholds. Don’t try to protect everything at once.

Adopt Least Privilege – Limit AI access to necessary data only. Separate agents by function. Use context separation for different tasks.

Defense in Depth – Multiple guardrail layers using diverse detection methods (rule-based + ML). Redundant validation checks with fail-safe defaults.

Build Observability First – Comprehensive logging, real-time dashboards, automated alerts, forensic analysis capabilities. You can’t protect what you can’t see.

Continuous Improvement – Regular evaluation, attack simulation exercises, benchmark against emerging threats, community threat intelligence.

The Business Case

Costs Without Guardrails:

- Average data breach: $4.44 million [IBM]

- Regulatory fines: Up to 7% of global revenue (EU AI Act)

- Customer churn: 30-40% after major incidents

- Reputation damage: Immeasurable

Benefits With Guardrails:

- 40% faster incident response

- 60% reduction in false positives

- $2.1M average savings per prevented breach

- Compliance achieved, fines avoided

Typical payback period: 6-12 months in enterprise deployments. One prevented breach pays for the entire implementation.

Conclusion

AI guardrails aren’t optional anymore ,they’re foundational infrastructure for responsible AI deployment. Recent security incidents demonstrate that sophisticated attacks are already happening at scale. The question isn’t whether to implement guardrails, but how quickly.

The good news: proven frameworks exist, best practices are documented, and the ROI is clear. Start with your highest-risk use case, measure results, and scale systematically. The cost of prevention is always lower than the cost of breach response.

As AI systems gain more autonomy, access more sensitive data, and make more critical decisions, the attack surface expands. Guardrails that adapt to context, learn from attacks, and enforce policies in real-time are no longer a competitive advantage ,they’re a survival requirement.

Key Resources

Official Documentation:

- Guardrails AI Hub: https://hub.guardrailsai.com

- NVIDIA NeMo: https://developer.nvidia.com/nemo-guardrails

- AWS Bedrock: https://aws.amazon.com/bedrock/guardrails

- OWASP Top 10 LLM: https://owasp.org/www-project-top-10-for-large-language-model-applications/

Security Research:

- Guardrails Index: https://index.guardrailsai.com

- Fiddler Benchmarks: https://www.fiddler.ai/guardrails-benchmarks

Incident Reports:

- Reco AI Cloud Breaches: https://www.reco.ai/blog/ai-and-cloud-security-breaches-2025

- Obsidian Security: https://www.obsidiansecurity.com/blog/prompt-injection

- CSO Online Threats: https://www.csoonline.com/article/4111384/top-5-real-world-ai-security-threats-revealed-in-2025.html

Compliance Frameworks:

- NIST AI RMF: https://www.nist.gov/itl/ai-risk-management-framework

- ISO/IEC 42001: AI Management Systems

Feel free to comment | like | share this article.