Field visit by Raveendiran RR

Date: 2026-02-12

A human-eye view of CynLr’s Object Intelligence Platform launch in Bengaluru, where robots learn to see like humans.

CynLr just launched a platform that teaches robots to see and learn like a human baby. I was there on Day Zero. Here’s what I saw.

I’ve been to a lot of tech events. Product demos, startup pitches, DevRel meetups. You know the drill: slide deck, buzzwords, polite applause. But when I stepped inside CynLr’s Cybernetics H.I.V.E in Bengaluru on February 12th, something felt immediately different.

There were no slides waiting for me at the entrance. There were robots. Moving, thinking, grabbing. Dozens of them: robotic arms spread across a 14,000-square-foot floor, engineers huddled over laptops, screens showing simulation environments, and somewhere in the middle of it all, a real car door frame that two robot arms were methodically working on. Together. In real time.

I had blue shoe covers on my feet – mandatory for the lab floor. And I felt, honestly, a little underdressed for the future.

“The last fifty years of robotics were about controlled environments. Programming every movement, every condition, every exception. We built machines that could repeat but not respond. That era is ending.” (Gokul NA, Founder, CynLr)

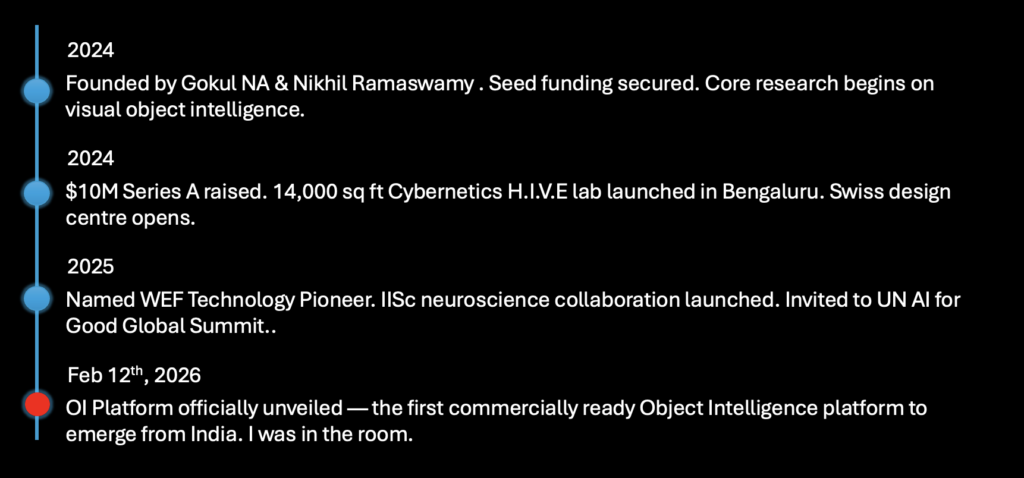

February 12th, 2026 wasn’t just another company visit. This was the day CynLr (short for Cybernetics Laboratories) officially unveiled its Object Intelligence (OI) Platform to the world. After five years of grinding, researching, and rebuilding, they launched something the global robotics industry has been trying to crack for decades. And they did it from Bengaluru.

So… What Exactly Is CynLr?

If you haven’t heard of CynLr before, that’s kind of the point. They operate quietly. No flashy social media presence, no endless keynotes. Just deep, patient R&D. The company was founded in 2019 by Gokul NA and Nikhil Ramaswamy, two former Application Engineers at National Instruments who spent years obsessing over one question: Why can’t robots see the way humans do?

The short answer to their question: because we’ve been building the wrong thing. Traditional robots are programmed like calculators: give them a specific input, get a specific output, forever. That works fine in a controlled car factory in 1985, where the same bolt goes into the same hole every six seconds. It breaks completely the moment anything changes: a different part, a reflective surface, an object that arrives upside down.

CynLr’s insight was to go back to biology; specifically, to study how the human brain handles vision. (Fun fact: by some estimates, more than 55% of your brain is involved in processing visual data.) They’ve now formalized this in a research partnership with IISc (Indian Institute of Science), under a program called “Visual Neuroscience for Cybernetics.”

CynLr’s Object Intelligence is already being tested where it matters most: automotive assembly lines handling irregular parts, electronics manufacturing with reflective components, and logistics warehouses sorting unknown objects. One robot, trained once, adapting forever. No retooling. No downtime.

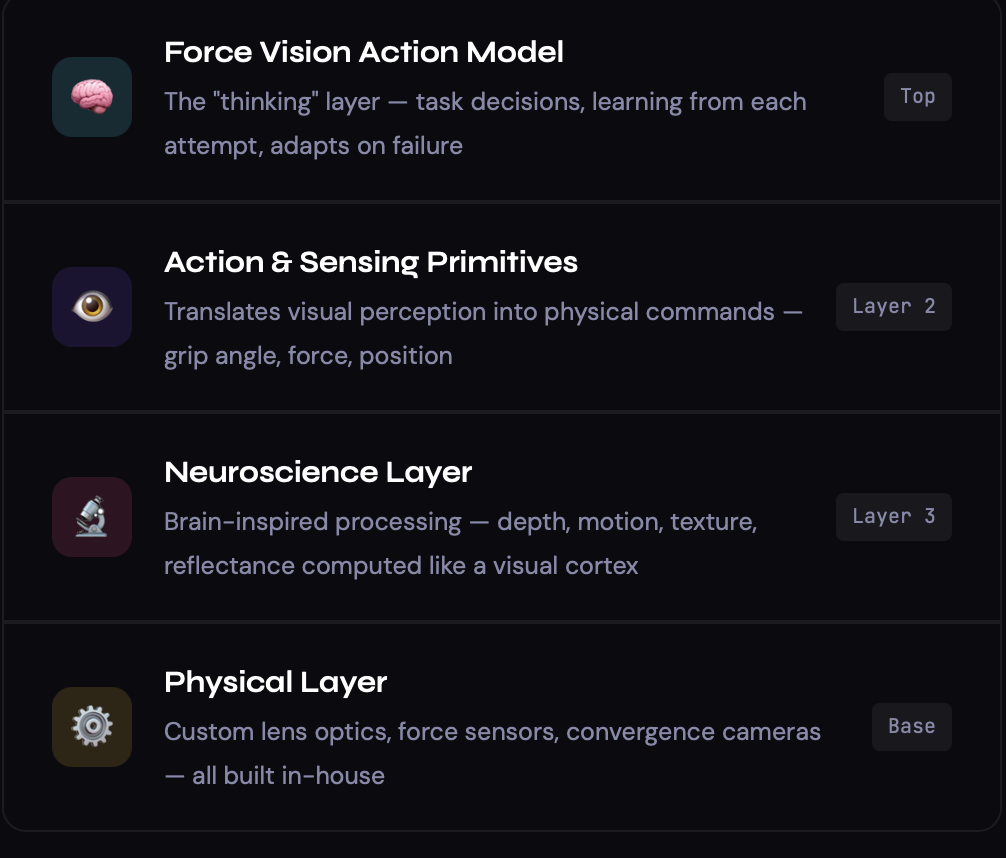

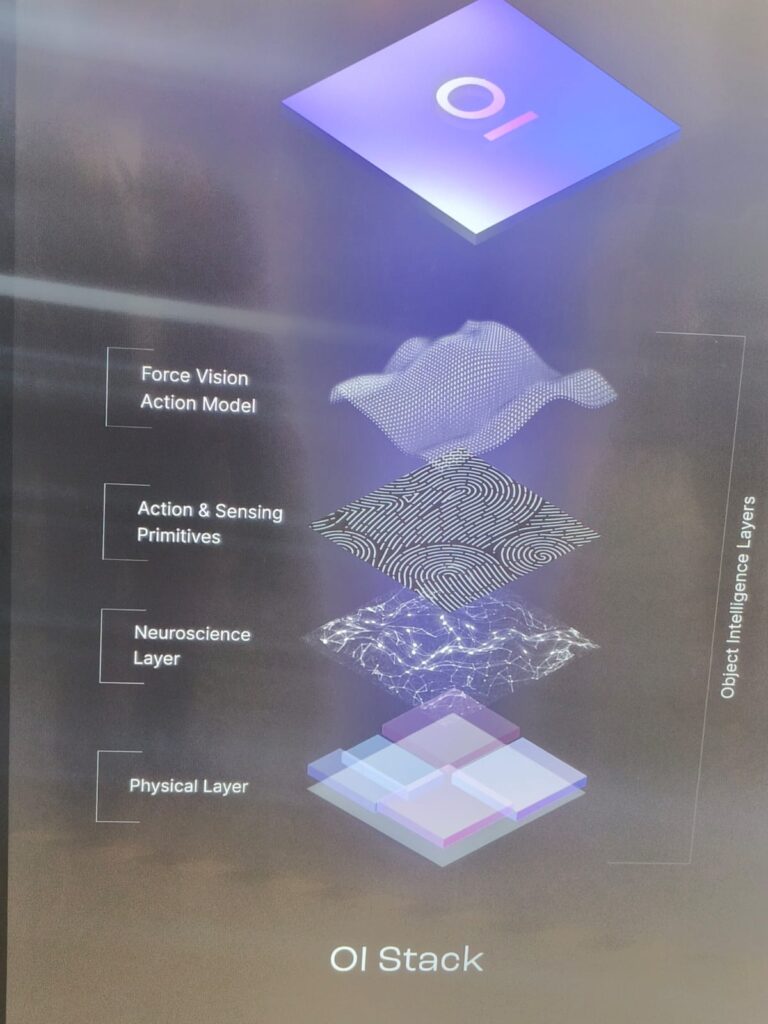

The OI Stack: How It Actually Works

At the heart of everything CynLr builds is the OI Stack: four layers of intelligence that together give a robot genuine perception. I saw this diagram on displays throughout the lab. Here it is, from top to bottom:

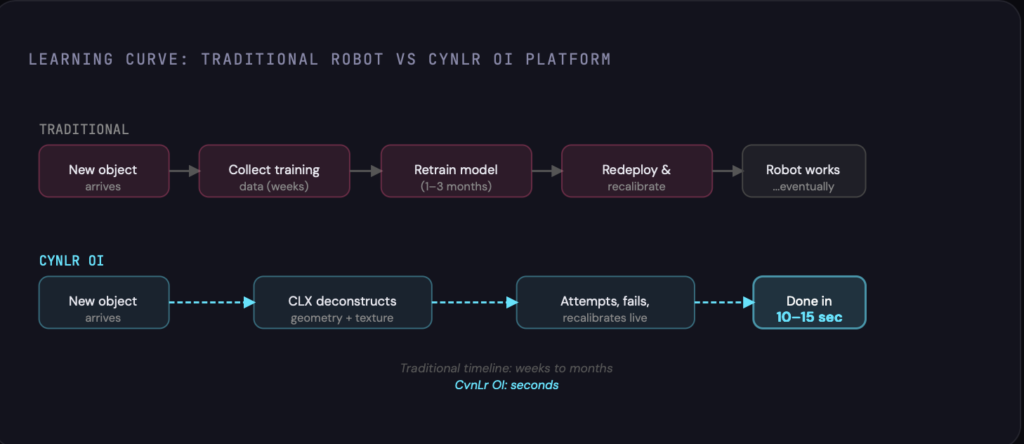

The magic lives in a device called the CLX, CynLr’s vision module that mounts on top of robotic arms. When a CLX-equipped robot encounters an object it has never seen before, it doesn’t freeze up. It deconstructs the object in real time, reading its geometry, texture, reflectance, and possible grip points, and then attempts to pick it up. If it fails, it recalibrates immediately and learns from the failure. Within 10 to 15 seconds, it has usually figured the object out.
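CynLr hasn’t published how the CLX does this internally, so here is only a toy sketch of the general “attempt, fail, re-rank, retry” loop described above. Everything in it is hypothetical: `GraspCandidate`, `grasp_with_retries`, the confidence scores, and the `try_grasp` callback (a stand-in for a physical pick attempt) are illustrative names, not CynLr’s API.

```python
from dataclasses import dataclass


@dataclass
class GraspCandidate:
    """Hypothetical grip point with a confidence score from perception."""
    name: str
    score: float


def grasp_with_retries(candidates, try_grasp, max_attempts=5, penalty=0.5):
    """Try the best-scoring grip; on failure, demote it and re-rank.

    `try_grasp(name)` is a hypothetical stand-in for a physical pick attempt
    and returns True on success. Returns (successful grip name or None,
    number of attempts used).
    """
    # Copy the candidates so the caller's perception output stays untouched.
    pool = [GraspCandidate(c.name, c.score) for c in candidates]
    for attempt in range(1, max_attempts + 1):
        best = max(pool, key=lambda c: c.score)
        if try_grasp(best.name):
            return best.name, attempt
        # "Learn" from the failure: lower this grip's score so the
        # next-most-promising grip gets tried on the following attempt.
        best.score *= penalty
    return None, max_attempts


if __name__ == "__main__":
    grips = [
        GraspCandidate("top_edge", 0.9),
        GraspCandidate("side_flange", 0.7),
        GraspCandidate("inner_lip", 0.4),
    ]
    # Pretend only the side flange gives a stable grip on this object.
    result = grasp_with_retries(grips, lambda name: name == "side_flange")
    print(result)  # ('side_flange', 2)
```

The design choice here is deliberately simple: a failed grip is demoted rather than discarded, so the robot keeps exploring alternatives but can still return to an earlier option if everything else looks worse.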

Compare that to traditional robot retraining, which can take weeks to months. That’s not a marginal improvement. That’s a fundamentally different approach to the problem.

What I Actually Saw on the Floor

Words can only do so much. So let me walk you through what was in front of me, station by station.

🤖 The Multi-Arm Stations

Multiple setups featured dual and triple robotic arms, with deep navy blue bodies and that characteristic teal ring lighting at each joint, working in concert. One station had a team training their arms to cooperate on a shared task, with the simulation playing out on two side-by-side monitors showing a 3D checkerboard environment. When the real arm moved, its digital twin moved in perfect synchrony. Watching an engineer nudge code on a laptop while a robotic arm four feet away instantly adjusted its grip was surreal.

🚗 The Automotive Demo

This one stopped me cold. An actual car door frame was mounted on a rig, and two white robotic arms were working inside it with coordinated precision. This is exactly the kind of task that car manufacturers lose millions trying to automate, not because robot arms lack strength, but because they lack the contextual judgment to work in a tight, variable, real-world space. CynLr already counts Denso and General Motors among its pilot customers. The car door on that rig wasn’t hypothetical. It was proof.

What made this demo particularly striking was the robot’s ability to handle the car door’s unpredictable geometry (curved edges, hollow cavities, varying surface reflectance) without any prior training on that specific part. It figured out the grip in real time. That is the whole thesis, right there in one demo.

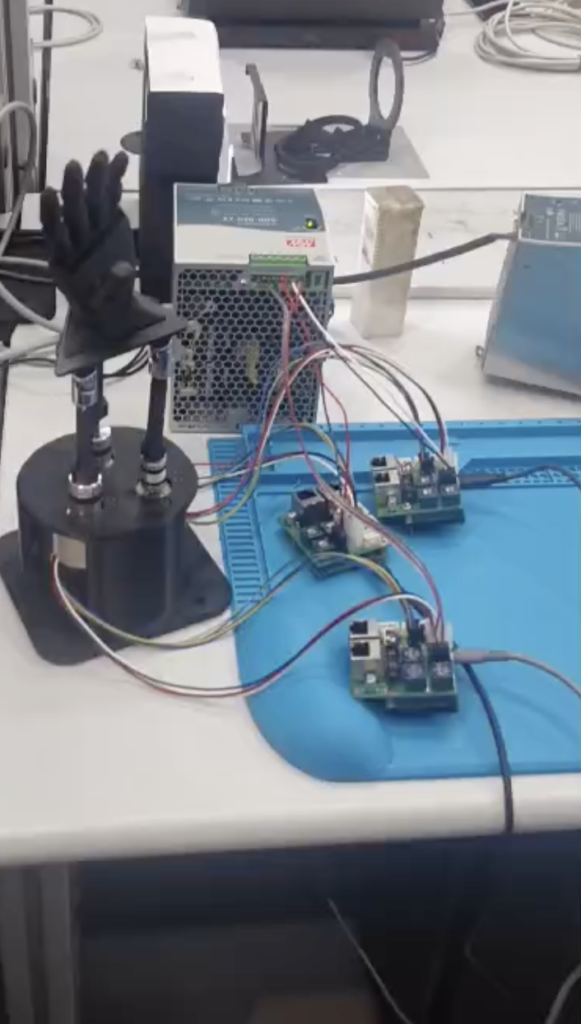

🔭 The CLX Vision Modules, Deconstructed

One of the most fascinating displays was a glass table showing the CLX camera module completely taken apart, with every sensor, lens, PCB, frame, and actuator laid out neatly beside a tablet showing the assembled device in operation. Three generations of the vision module were displayed side by side: you could literally see how the design evolved, got denser, got smarter. It was equal parts art installation and engineering showcase.

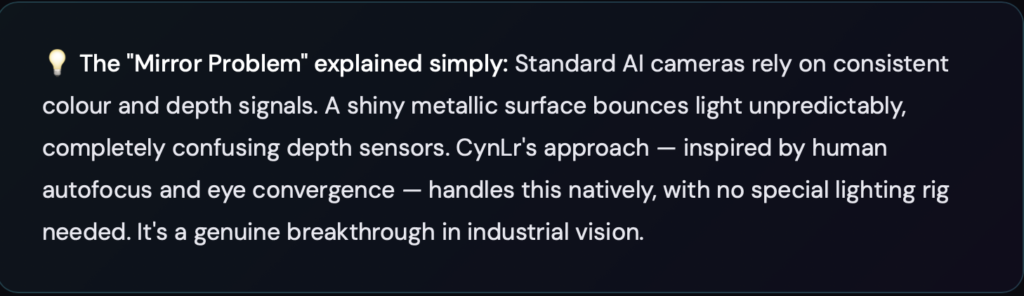

This device is the eyes. Auto-focus liquid lens optics, optical convergence, temporal imaging, and hierarchical depth mapping, all packed into something roughly the size of your fist. And critically, it’s what lets CynLr’s robots look at a mirror-finished metal part (historically the nightmare of every machine vision system) and still figure out exactly what it is and how to grab it.

According to the team, the CLX captures data across seven perceptual dimensions simultaneously, roughly as many as human eyes use. That is not a coincidence.

🦾 The KUKA Setup

One corner of the lab featured a classic KUKA industrial arm with CynLr branding, a deliberate signal that the OI platform is designed to be hardware-agnostic. You don’t throw away your existing robots. You upgrade their brain. That framing (not “replace your factory” but “make your current investment smarter”) is both smart product strategy and genuine empathy for manufacturers who can’t afford total overhauls.

🔧 The Mechanical Workshop

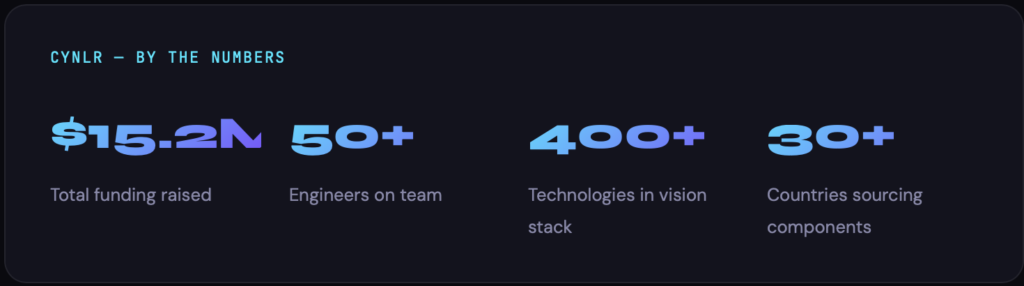

I got a glimpse of the mechanical workstation area: meticulously organized shelves, soldering stations, component drawers labeled with military precision. These people aren’t just writing code. They’re building physical hardware from scratch, sourcing over 400 technologies from 30+ countries to create something you genuinely can’t buy off any shelf.

Why This Matters, Even If You’re Not in Robotics

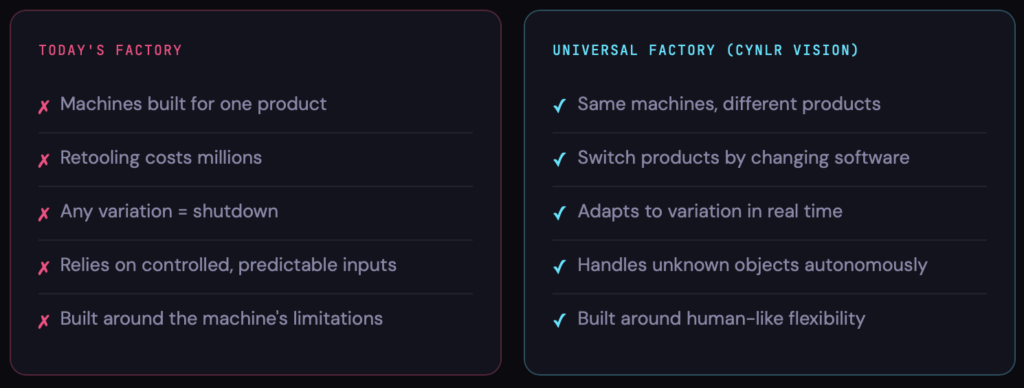

Here’s what I keep coming back to. This isn’t just a cool robotics demo. CynLr is going after something called the “Universal Factory”, and if they pull it off, it changes manufacturing at a civilizational scale.

| Traditional factory | Universal Factory |
| --- | --- |
| Machines built for one product | Same machines, different products |
| Retooling costs millions | Switch products by changing software |
| Any variation = shutdown | Adapts to variation in real time |
| Relies on controlled, predictable inputs | Handles unknown objects autonomously |
| Built around the machine’s limitations | Built around human-like flexibility |

Imagine the same factory floor making EV components today, retooled by software overnight to make medical device parts tomorrow. No new machines, no weeks of reconfiguration. That’s the vision. And the key to unlocking it? Giving robots the ability to see and understand objects the way we do, without needing months of retraining every time something changes.

The robotics market was valued at $48 billion in 2019. Of that, $32 billion went purely into customisation: adapting rigid robots to specific tasks. CynLr is going after that $32 billion. Not with brute force, but with intelligence. The numbers alone tell you the size of the opportunity.
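To put those two quoted figures side by side, a simple ratio shows that customisation accounted for about two-thirds of the 2019 market, leaving $16 billion for everything else:

$$
\frac{\$32\ \text{billion}}{\$48\ \text{billion}} = \frac{2}{3} \approx 66.7\%
$$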

An Indian Company Doing This. Right Here. In Bengaluru.

I want to pause here because this deserves its own moment of appreciation.

CynLr is not a branch office. Not a service center for foreign IP. This is original deep technology, conceived and built in India, for the global manufacturing industry. Their core R&D is right here in Bengaluru. They’ve recently opened a design center in Switzerland (near EPFL, one of the world’s top robotics research institutions) and a business development office in the US. The technology direction flows outward from India.

They’ve been named a 2025 Technology Pioneer by the World Economic Forum, a recognition given to 39 global innovators shaping the future of industry. They were invited to present at the United Nations AI for Good Global Summit. They’re collaborating with IISc on frontier neuroscience research.

My Honest Take

I went in knowing a little about CynLr. I came out genuinely humbled. There’s something about standing in a room full of moving robots, watching an engineer nudge code on a laptop while a robotic arm four feet away adjusts its grip in real time, that makes the abstract feel very, very concrete.

The phrase on the team’s t-shirts, “Sentience Before Intelligence”, is not just branding. It’s a philosophy that pushes back on the prevailing AI orthodoxy. The argument CynLr is making is that you don’t need a robot to be “smarter” in the large-language-model sense. You need it to perceive better first. Give it real sensory awareness of the world, and the intelligence follows naturally. That’s actually how biology works too. Babies don’t learn to think before they learn to see.

What impressed me most wasn’t the flashiest robot on the floor. It was the culture of rigour in that room. The organized component shelves. The simulation environments running in parallel with physical arms. The multiple generations of hardware under glass. The soldering station next to the KUKA arm. This is a team that doesn’t skip steps, and in deep tech, that discipline is everything.

“This is what finally makes robotics useful in the real world, where nothing stays the same twice.” (Gokul NA, on the Object Intelligence platform launch, Feb 12, 2026)

If you’re in tech, whether you build software, manage IT infrastructure, run teams, or just care about where Indian technology is heading, keep a close eye on CynLr. This is not a startup building the 47th productivity chatbot. This is foundational technology, built patiently and seriously, for an industry the entire world depends on.

Key Takeaways

- CynLr’s OI Stack is not just a product; it’s a new layer of robotics intelligence, like an OS for robot perception

- The CLX camera solves the “shiny object problem” that has defeated machine vision for decades

- Robots figure out how to grasp an unfamiliar object in 10–15 seconds, vs weeks to months of traditional retraining

- Hardware-agnostic: works on KUKA, Doosan, Flexiv, and other existing arms

- IISc collaboration brings real neuroscience into the codebase, not just as inspiration

- India is not catching up in robotics; CynLr is leading from the front

- The Universal Factory vision: one floor, infinite products, zero retooling

I’m grateful to have been in that room on launch day. And I’ll definitely be back. Special thanks to the CynLr team for showing us their vision of robotics, Object Intelligence, and the awesome demos. It was an electrifying experience.

Feel free to share, comment on, or like this post. Let’s pass on the excitement and learning to the world.