Introduction

Your pod is stuck. The deployment rolled out successfully, but containers refuse to start. You run kubectl get pods and see that dreaded CreateContainerConfigError status.

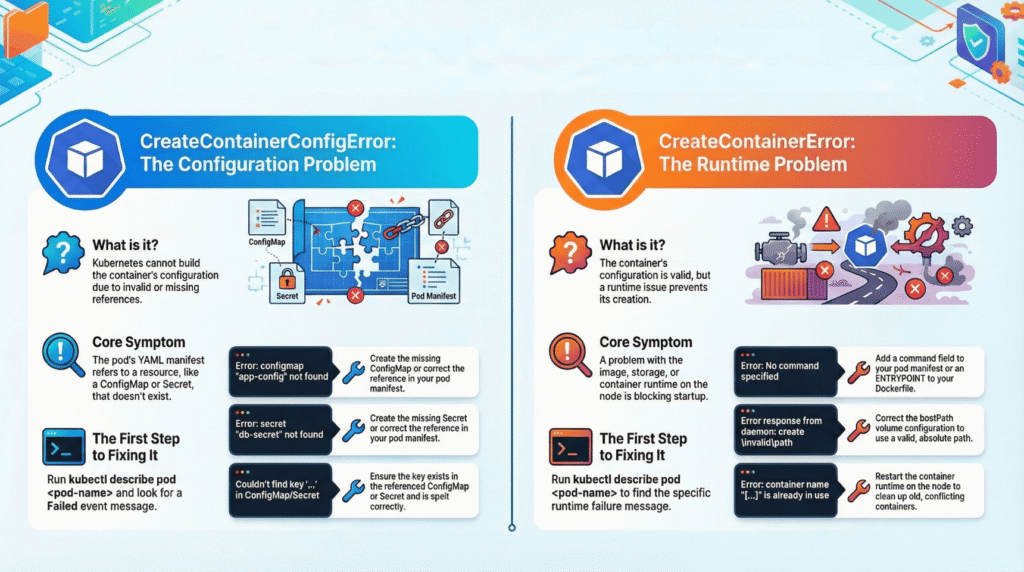

CreateContainerConfigError occurs when the kubelet cannot assemble a container's configuration before handing it to the container runtime. The failure happens before the container is created, so your application code never runs and there are no application logs to read. The cause lies in what the pod spec references: ConfigMaps, Secrets, or volume mounts.

Key Distinction: This isn't a runtime crash. The container never started, so the usual debugging tools (kubectl logs, kubectl exec) won't help.

Error Flow Visualization

graph TD

A[Pod Creation Request] --> B{Configuration Valid?}

B -->|No| C[CreateContainerConfigError]

B -->|Yes| D[Container Runtime]

D --> E{Runtime Success?}

E -->|No| F[CreateContainerError]

E -->|Yes| G[Running Pod]

C --> H[Check ConfigMaps]

C --> I[Check Secrets]

C --> J[Check Volume Mounts]

C --> K[Check RBAC]

Common Causes

1. Missing ConfigMap Reference

# deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: web-app

spec:

replicas: 1

selector:

matchLabels:

app: web

template:

metadata:

labels:

app: web

spec:

containers:

- name: nginx

image: nginx:1.21

envFrom:

- configMapRef:

name: app-config # ❌ This ConfigMap doesn't exist

Error Output:

kubectl describe pod web-app-xyz

Events:

Warning Failed CreateContainerConfigError

configmap "app-config" not found

2. Secret Key Mismatch

# pod-with-secret.yaml

apiVersion: v1

kind: Pod

metadata:

name: database-client

spec:

containers:

- name: psql

image: postgres:14

env:

- name: DB_PASSWORD

valueFrom:

secretKeyRef:

name: db-credentials

key: password # ❌ Actual key is 'db-password'

Verification Commands:

# List the keys the Secret actually contains

kubectl get secret db-credentials -o json | jq '.data | keys'

# Output shows actual keys

[

"db-password",

"username"

]
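The comparison above can be scripted so a mismatch is caught without reading the key list by eye. This is a minimal sketch: `secret_has_key` is a hypothetical helper name, not a kubectl feature, and it assumes the key names arrive one per line on stdin.

```shell
# secret_has_key KEY
# Reads key names (one per line) on stdin and succeeds only if KEY
# matches one of them exactly. Hypothetical helper, not part of kubectl.
secret_has_key() {
  # -F: fixed string, -x: whole-line match, -q: no output, exit status only
  grep -Fxq -- "$1"
}

# Example wiring (assumes kubectl and jq are installed):
#   kubectl get secret db-credentials -o json | jq -r '.data | keys[]' \
#     | secret_has_key password || echo "key 'password' is not in the Secret"
```

Matching the whole line matters here: a substring match would wrongly accept `password` because it appears inside `db-password`.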

3. Volume Mount Configuration Issue

# deployment-with-volume.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: config-reader

spec:

template:

spec:

containers:

- name: app

image: busybox

command: ['sh', '-c', 'cat /config/settings.json']

volumeMounts:

- name: config-volume

mountPath: /config

subPath: app-settings.json # ❌ subPath doesn't exist in ConfigMap

volumes:

- name: config-volume

configMap:

name: application-config

Troubleshooting Decision Tree

flowchart TD

Start[CreateContainerConfigError] --> Describe[kubectl describe pod]

Describe --> CheckEvents{Events Show?}

CheckEvents -->|ConfigMap not found| VerifyCM[kubectl get configmap]

CheckEvents -->|Secret not found| VerifySecret[kubectl get secret]

CheckEvents -->|Key reference failed| CheckKeys[Verify key names]

CheckEvents -->|Mount failed| CheckVolumes[Inspect volumeMounts]

VerifyCM --> CMExists{Exists?}

CMExists -->|No| CreateCM[Create ConfigMap]

CMExists -->|Yes| CheckNamespace[Wrong namespace?]

VerifySecret --> SecretExists{Exists?}

SecretExists -->|No| CreateSecret[Create Secret]

SecretExists -->|Yes| CheckKeyName[Verify key in Secret]

CheckKeys --> FixManifest[Update pod spec]

CheckVolumes --> FixVolume[Correct mount path/subPath]

CreateCM --> Restart[Delete pod or rollout restart]

CreateSecret --> Restart

FixManifest --> Restart

FixVolume --> Restart

CheckNamespace --> Restart

CheckKeyName --> Restart
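The first branch of the tree, going from an event message to the next command, can be sketched as a small shell function. The function name `suggest_next_step` is hypothetical, and the message patterns are assumptions based on the examples in this article; the exact kubelet wording can differ between Kubernetes versions.

```shell
# suggest_next_step MESSAGE [NAMESPACE]
# Maps the text of a kubelet "Failed" event to the next step to take.
# The body runs in a subshell so its variables do not leak to the caller.
suggest_next_step() (
  msg=$1
  ns=${2:-default}
  # Pull out the first double-quoted token (the resource name), if any.
  name=$(printf '%s\n' "$msg" | sed -n 's/^[^"]*"\([^"]*\)".*$/\1/p')
  case $msg in
    *configmap*"not found"*) echo "kubectl get configmap ${name} -n ${ns}" ;;
    *secret*"not found"*)    echo "kubectl get secret ${name} -n ${ns}" ;;
    *"couldn't find key"*)   echo "compare the key in the pod spec with the keys in the resource" ;;
    *forbidden*)             echo "review RBAC for the ServiceAccount named in the message" ;;
    *subPath*|*mount*)       echo "inspect volumeMounts and subPath in the pod spec" ;;
    *)                       echo "read the full Events section of kubectl describe pod" ;;
  esac
)
```

If the suggested `kubectl get` succeeds, the resource exists in that namespace and the remaining suspects are the key name or the namespace the pod actually runs in.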

Step-by-Step Resolution

Step 1: Identify the Error

# Check pod status

kubectl get pods -n production

NAME READY STATUS RESTARTS AGE

web-app-7d9c8b-xyz 0/1 CreateContainerConfigError 0 2m

Step 2: Extract Event Details

# Get detailed pod information

kubectl describe pod web-app-7d9c8b-xyz -n production

Critical Section – Events:

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 3m default-scheduler Successfully assigned production/web-app-7d9c8b-xyz to node-1

Warning Failed 2m (x5 over 3m) kubelet Error: configmap "app-config" not found
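When a pod has a long event history, it can help to print only the failure messages. The sketch below assumes the five-column Events layout shown above and that the From column reads kubelet; `failed_event_messages` is a hypothetical name.

```shell
# failed_event_messages
# Reads `kubectl describe pod` output on stdin and prints the message of
# every Warning/Failed event found after the "Events:" header.
failed_event_messages() {
  awk '
    /^Events:/ { in_events = 1; next }
    in_events && $1 == "Warning" && $2 == "Failed" {
      # Drop everything up to and including the "kubelet" source column.
      # Assumes the message itself does not contain the word "kubelet".
      sub(/^.*kubelet[ \t]+/, "")
      print
    }
  '
}

# Example wiring:
#   kubectl describe pod web-app-7d9c8b-xyz -n production | failed_event_messages
```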

Step 3: Audit Configuration Resources

Comprehensive Check Script:

#!/bin/bash

# troubleshoot-pod.sh

POD_NAME=$1

NAMESPACE=${2:-default}

echo "=== Pod Status ==="

kubectl get pod $POD_NAME -n $NAMESPACE

echo -e "\n=== Events ==="

kubectl describe pod $POD_NAME -n $NAMESPACE | grep -A 20 "Events:"

echo -e "\n=== Referenced ConfigMaps ==="

kubectl get pod $POD_NAME -n $NAMESPACE -o json | \

jq -r '.spec.volumes[]?.configMap.name // empty' | \

while read cm; do

echo "Checking ConfigMap: $cm"

kubectl get configmap $cm -n $NAMESPACE 2>&1

done

echo -e "\n=== Referenced Secrets ==="

kubectl get pod $POD_NAME -n $NAMESPACE -o json | \

jq -r '.spec.volumes[]?.secret.secretName // empty' | \

while read secret; do

echo "Checking Secret: $secret"

kubectl get secret $secret -n $NAMESPACE 2>&1

done

echo -e "\n=== Environment Variables from ConfigMaps ==="

kubectl get pod $POD_NAME -n $NAMESPACE -o json | \

jq -r '.spec.containers[].envFrom[]?.configMapRef.name // empty'

echo -e "\n=== Environment Variables from Secrets ==="

kubectl get pod $POD_NAME -n $NAMESPACE -o json | \

jq -r '.spec.containers[].envFrom[]?.secretRef.name // empty'

Usage:

chmod +x troubleshoot-pod.sh

./troubleshoot-pod.sh web-app-7d9c8b-xyz production

Fix Scenarios

Scenario 1: Create Missing ConfigMap

# Quick inline creation

kubectl create configmap app-config \

--from-literal=API_URL=https://api.example.com \

--from-literal=LOG_LEVEL=info \

-n production

Or from file:

# config.properties

API_URL=https://api.example.com

LOG_LEVEL=info

MAX_CONNECTIONS=100

# Create from file

kubectl create configmap app-config \

--from-file=config.properties \

-n production

Declarative approach:

# configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: app-config

namespace: production

data:

API_URL: "https://api.example.com"

LOG_LEVEL: "info"

config.json: |

{

"database": {

"host": "db.internal",

"port": 5432

},

"cache": {

"ttl": 3600

}

}

kubectl apply -f configmap.yaml

Scenario 2: Fix Secret Key Reference

Current broken configuration:

env:

- name: DB_PASSWORD

valueFrom:

secretKeyRef:

name: db-credentials

key: password # ❌ Wrong key

Check actual keys:

kubectl get secret db-credentials -o jsonpath='{.data}' | jq 'keys'

# Output: ["db-password", "username"]

Corrected configuration:

env:

- name: DB_PASSWORD

valueFrom:

secretKeyRef:

name: db-credentials

key: db-password # ✅ Correct key

Apply fix:

kubectl patch deployment web-app -n production --type='json' \

-p='[{"op": "replace", "path": "/spec/template/spec/containers/0/env/0/valueFrom/secretKeyRef/key", "value":"db-password"}]'
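The patch path above hardcodes container 0 and env entry 0, which only works when DB_PASSWORD is the first variable of the first container. A small helper can build the same JSON Patch for other positions. This is a sketch under that assumption; `secret_key_patch` is a hypothetical name, and the indexes should be confirmed against the Deployment's YAML before patching.

```shell
# secret_key_patch CONTAINER_INDEX ENV_INDEX NEW_KEY
# Prints a JSON Patch document that replaces secretKeyRef.key for one
# env entry. Indexes are zero-based positions in the Deployment's
# containers and env lists. NEW_KEY is inserted as-is, so it must not
# contain double quotes or backslashes.
secret_key_patch() {
  printf '[{"op":"replace","path":"/spec/template/spec/containers/%s/env/%s/valueFrom/secretKeyRef/key","value":"%s"}]\n' "$1" "$2" "$3"
}

# Example wiring (DB_PASSWORD as the third env entry of the first container):
#   kubectl patch deployment web-app -n production --type=json \
#     -p "$(secret_key_patch 0 2 db-password)"
```

A JSON Patch `replace` fails if the target path does not exist, so a wrong index is rejected rather than applied silently when that entry has no secretKeyRef.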

Scenario 3: Volume Mount SubPath Issue

Problematic manifest:

volumeMounts:

- name: config-volume

mountPath: /app/config

subPath: application.conf # ❌ File doesn't exist in ConfigMap

volumes:

- name: config-volume

configMap:

name: app-settings

Check ConfigMap contents:

kubectl get configmap app-settings -o json | jq '.data | keys'

# Output: ["app.conf", "database.conf"]

Fix options:

Option A – Remove subPath:

volumeMounts:

- name: config-volume

mountPath: /app/config

# Mount entire ConfigMap

Option B – Use correct filename:

volumeMounts:

- name: config-volume

mountPath: /app/config/application.conf

subPath: app.conf # ✅ Matches actual key

Scenario 4: RBAC Permission Issues

Error message:

Error: secrets "api-keys" is forbidden:

User "system:serviceaccount:production:default" cannot get resource "secrets"

Grant access:

# rbac-fix.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: app-service-account

namespace: production

---

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

name: secret-reader

namespace: production

rules:

- apiGroups: [""]

resources: ["secrets", "configmaps"]

verbs: ["get", "list"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: read-secrets

namespace: production

subjects:

- kind: ServiceAccount

name: app-service-account

namespace: production

roleRef:

kind: Role

name: secret-reader

apiGroup: rbac.authorization.k8s.io

Update Deployment to use ServiceAccount:

spec:

template:

spec:

serviceAccountName: app-service-account

containers:

- name: app

image: myapp:1.0

kubectl apply -f rbac-fix.yaml

kubectl rollout restart deployment web-app -n production

Error Comparison Matrix

graph LR

A[Pod Start Process] --> B{Config Assembly}

B -->|Failed| C[CreateContainerConfigError]

B -->|Success| D{Runtime Creation}

D -->|Failed| E[CreateContainerError]

D -->|Success| F{App Execution}

F -->|Failed| G[CrashLoopBackOff]

style C fill:#ff6b6b

style E fill:#ffa94d

style G fill:#ffd43b

style F fill:#51cf66

| Error Type | Phase | Container Started? | Check Logs? | Common Causes |

|---|---|---|---|---|

| CreateContainerConfigError | Pre-start | ❌ No | ❌ Useless | Missing ConfigMap/Secret, key mismatch, volume mount error |

| CreateContainerError | Runtime init | ❌ No | ❌ Empty | Invalid command or entrypoint, container name conflict in the runtime, inaccessible volume |

| CrashLoopBackOff | Post-start | ✅ Yes | ✅ Check | Application crash, missing dependencies, port conflicts |
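The table can be turned into a lookup that tells you which command to reach for first. A minimal sketch: `triage_status` is a hypothetical helper, and the status string is assumed to come from the pod's waiting reason.

```shell
# triage_status STATUS
# Prints the failure phase and the first command worth running for the
# three statuses compared in the table above.
triage_status() {
  case $1 in
    CreateContainerConfigError) echo "pre-start: kubectl describe pod" ;;
    CreateContainerError)       echo "runtime-init: kubectl describe pod" ;;
    CrashLoopBackOff)           echo "post-start: kubectl logs --previous" ;;
    *)                          echo "unknown: kubectl describe pod" ;;
  esac
}

# Example wiring:
#   reason=$(kubectl get pod <pod-name> -o jsonpath='{.status.containerStatuses[0].state.waiting.reason}')
#   triage_status "$reason"
```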

Automated Detection Script

#!/bin/bash

# detect-config-errors.sh

NAMESPACE=${1:-default}

echo "Scanning for CreateContainerConfigError in namespace: $NAMESPACE"

echo "================================================================"

kubectl get pods -n $NAMESPACE --field-selector=status.phase!=Running,status.phase!=Succeeded -o json | \

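jq -r '.items[] |

select(.status.containerStatuses != null) |

# Collect only the containers stuck in CreateContainerConfigError, so a

# multi-container pod is reported once instead of once per combination

[.status.containerStatuses[] | select(.state.waiting.reason == "CreateContainerConfigError")] as $failed |

select($failed | length > 0) |

{

pod: .metadata.name,

namespace: .metadata.namespace,

containers: [$failed[].name],

message: ($failed[0].state.waiting.message // "no message")

}' | \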

jq -s '.' | \

jq -r '.[] | "Pod: \(.pod)\nContainers: \(.containers | join(", "))\nError: \(.message)\n---"'

echo ""

echo "Detailed Troubleshooting:"

echo "========================="

kubectl get pods -n $NAMESPACE --field-selector=status.phase!=Running -o name | \

while read pod; do

POD_NAME=$(echo $pod | cut -d'/' -f2)

ERROR_STATUS=$(kubectl get $pod -n $NAMESPACE -o jsonpath='{.status.containerStatuses[*].state.waiting.reason}')

if [[ $ERROR_STATUS == *"CreateContainerConfigError"* ]]; then

echo ""

echo "🔍 Analyzing: $POD_NAME"

echo "Events:"

kubectl describe pod $POD_NAME -n $NAMESPACE | grep -A 10 "Events:" | grep -iE "(configmap|secret|not found|forbidden)"

fi

done

Prevention Strategies

1. Pre-Deployment Validation

#!/bin/bash

# validate-resources.sh

MANIFEST=$1

NAMESPACE=${2:-default}

echo "Validating manifest: $MANIFEST"

# Extract ConfigMap references

CONFIGMAPS=$(yq eval '.spec.template.spec.volumes[].configMap.name | select(. != null)' $MANIFEST 2>/dev/null)

for cm in $CONFIGMAPS; do

if kubectl get configmap $cm -n $NAMESPACE &>/dev/null; then

echo "✅ ConfigMap $cm exists"

else

echo "❌ ConfigMap $cm NOT FOUND"

exit 1

fi

done

# Extract Secret references

SECRETS=$(yq eval '.spec.template.spec.volumes[].secret.secretName | select(. != null)' $MANIFEST 2>/dev/null)

for secret in $SECRETS; do

if kubectl get secret $secret -n $NAMESPACE &>/dev/null; then

echo "✅ Secret $secret exists"

else

echo "❌ Secret $secret NOT FOUND"

exit 1

fi

done

echo "✅ All resources validated"

Usage in CI/CD:

./validate-resources.sh deployment.yaml production && \

kubectl apply -f deployment.yaml

2. Helm Chart Dependencies

# Chart.yaml

apiVersion: v2

name: web-application

version: 1.0.0

dependencies:

- name: common-config

version: "1.x.x"

repository: "@company-repo"

# values.yaml

configMaps:

create: true

data:

API_URL: "https://api.example.com"

LOG_LEVEL: "info"

secrets:

create: true

data:

DB_PASSWORD: "" # values.yaml is not rendered as a template; supply this at install time, e.g. with --set

# templates/deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: {{ .Release.Name }}

spec:

template:

spec:

containers:

- name: app

image: {{ .Values.image.repository }}:{{ .Values.image.tag }}

envFrom:

- configMapRef:

name: {{ .Release.Name }}-config

{{- if .Values.secrets.create }}

- secretRef:

name: {{ .Release.Name }}-secrets

{{- end }}

3. Kustomize Overlay Strategy

├── base/

│ ├── deployment.yaml

│ ├── configmap.yaml

│ ├── secret.yaml

│ └── kustomization.yaml

└── overlays/

├── dev/

│ ├── configmap.yaml

│ └── kustomization.yaml

└── prod/

├── configmap.yaml

└── kustomization.yaml

base/kustomization.yaml:

apiVersion: kustomize.config.k8s.io/v1beta1

kind: Kustomization

resources:

- deployment.yaml

- configmap.yaml

- secret.yaml

configMapGenerator:

- name: app-config

literals:

- APP_ENV=base

overlays/prod/kustomization.yaml:

apiVersion: kustomize.config.k8s.io/v1beta1

kind: Kustomization

resources:

- ../../base

configMapGenerator:

- name: app-config

behavior: merge

literals:

- APP_ENV=production

- API_URL=https://api.prod.example.com

# Deploy with guaranteed resource creation

kustomize build overlays/prod | kubectl apply -f -

4. Admission Controller Validation

# validating-webhook.yaml

apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingWebhookConfiguration

metadata:

name: config-validator

webhooks:

- name: validate-config-refs.example.com

admissionReviewVersions: ["v1"]

clientConfig:

service:

name: validation-service

namespace: kube-system

path: "/validate"

rules:

- operations: ["CREATE", "UPDATE"]

apiGroups: ["apps"]

apiVersions: ["v1"]

resources: ["deployments", "statefulsets"]

failurePolicy: Fail

sideEffects: None

Validation logic (Go example):

// webhook-validator.go

func validateConfigReferences(pod *corev1.Pod) error {

client := kubernetes.NewForConfigOrDie(config)

for _, volume := range pod.Spec.Volumes {

if volume.ConfigMap != nil {

_, err := client.CoreV1().ConfigMaps(pod.Namespace).

Get(context.TODO(), volume.ConfigMap.Name, metav1.GetOptions{})

if err != nil {

return fmt.Errorf("ConfigMap %s not found", volume.ConfigMap.Name)

}

}

if volume.Secret != nil {

_, err := client.CoreV1().Secrets(pod.Namespace).

Get(context.TODO(), volume.Secret.SecretName, metav1.GetOptions{})

if err != nil {

return fmt.Errorf("Secret %s not found", volume.Secret.SecretName)

}

}

}

return nil

}

Quick Reference Commands

# Diagnose

kubectl get pods | grep CreateContainerConfigError

kubectl describe pod <pod-name> | grep -A 20 Events

# Verify resources

kubectl get configmap <name> -o yaml

kubectl get secret <name> -o jsonpath='{.data}' | jq 'keys'

# Check keys in ConfigMap

kubectl get configmap <name> -o json | jq '.data | keys'

# List key names in Secret (values stay base64-encoded)

kubectl get secret <name> -o json | jq -r '.data | to_entries[] | .key'

# Force pod recreation

kubectl delete pod <pod-name>

kubectl rollout restart deployment <deployment-name>

# Watch pod recovery

kubectl get pods -w

# Export pod spec for analysis

kubectl get pod <pod-name> -o yaml > debug-pod.yaml

Advanced Debugging Technique

#!/bin/bash

# deep-inspect.sh

POD=$1

NAMESPACE=${2:-default}

echo "=== Container Configuration Assembly ==="

kubectl get pod $POD -n $NAMESPACE -o json | jq '{

configMaps: [

.spec.volumes[]? | select(.configMap != null) |

{

name: .configMap.name,

mountedAs: .name,

optional: .configMap.optional

}

],

secrets: [

.spec.volumes[]? | select(.secret != null) |

{

name: .secret.secretName,

mountedAs: .name,

optional: .secret.optional

}

],

envFromConfigMaps: [

.spec.containers[].envFrom[]? | select(.configMapRef != null) |

.configMapRef.name

],

envFromSecrets: [

.spec.containers[].envFrom[]? | select(.secretRef != null) |

.secretRef.name

],

envConfigMapKeys: [

.spec.containers[].env[]? | select(.valueFrom.configMapKeyRef != null) |

{

envVar: .name,

configMap: .valueFrom.configMapKeyRef.name,

key: .valueFrom.configMapKeyRef.key

}

],

envSecretKeys: [

.spec.containers[].env[]? | select(.valueFrom.secretKeyRef != null) |

{

envVar: .name,

secret: .valueFrom.secretKeyRef.name,

key: .valueFrom.secretKeyRef.key

}

]

}'

echo ""

echo "=== Cross-Reference Check ==="

# Check each referenced ConfigMap

kubectl get pod $POD -n $NAMESPACE -o json | \

jq -r '.spec.volumes[]?.configMap.name // empty' | sort -u | \

while read cm; do

if kubectl get configmap $cm -n $NAMESPACE &>/dev/null; then

echo "✅ ConfigMap: $cm"

else

echo "❌ ConfigMap: $cm (NOT FOUND)"

fi

done

# Check each referenced Secret

kubectl get pod $POD -n $NAMESPACE -o json | \

jq -r '.spec.volumes[]?.secret.secretName // empty' | sort -u | \

while read secret; do

if kubectl get secret $secret -n $NAMESPACE &>/dev/null; then

echo "✅ Secret: $secret"

else

echo "❌ Secret: $secret (NOT FOUND)"

fi

done

Conclusion

CreateContainerConfigError is a pre-start configuration assembly failure, not an application bug. The container never executed, making traditional debugging methods ineffective.

Resolution checklist:

- ✅ Run kubectl describe pod <name> first; never start with logs

- ✅ Verify ConfigMaps/Secrets exist: kubectl get configmap/secret

- ✅ Check key names match exactly

- ✅ Validate RBAC permissions for ServiceAccount

- ✅ Recreate pod after fixing: kubectl delete pod or kubectl rollout restart

Prevention:

- Validate manifests before deployment (--dry-run=client)

- Use Helm/Kustomize to manage dependencies

- Implement admission controllers for automated validation

- Create CI/CD checks for resource references

When this error appears, check the kubectl describe output first: the Events section states the exact cause. Fix the missing or mismatched reference, recreate the pod, and the containers should start.